Quest Active: Where Game Studios Are With AI

Five stages of AI adoption in game studios

Many game studios are grappling with AI adoption in some form. Some are actively building it into their pipelines. Some are running cautious experiments. Some are ignoring it entirely. And within any given studio, different departments are often at completely different points.

What’s missing from most of the conversation is a clear picture of what the path looks like, where the industry sits on it today, and where it leads.

What the surveys tell us

The GDC 2026 State of the Game Industry survey drew more than 2,300 respondents, and 36% of them reported using generative AI tools. The rate was 30% among people working at game studios and 58% among those in publishing, marketing, and support roles. By seniority, upper management came in at 47% and individual contributors at 29%.

What they use it for provides more insight than the top-line number. Brainstorming and research: 81%. Code assistance: 47%. Asset generation: 19%. Procedural generation: 10%. Player-facing features: 5%.

AI usage is highest for thinking and lowest for shipping. Studios are learning what works in low-risk contexts before committing to production workflows, which is a reasonable way to approach any new technology. 78% of companies already have AI policies in place. About 30% of AAA studios are running proprietary AI systems. The foundation for broader adoption is already there.

Sentiment is more complicated. 52% of respondents say generative AI is bad for the games industry, up from 30% last year. But the survey data and my conversations with developers and executives at GDC point the same way: adoption is growing and shows no signs of stopping. People can be skeptical and still be adopting. Both things are true at the same time.

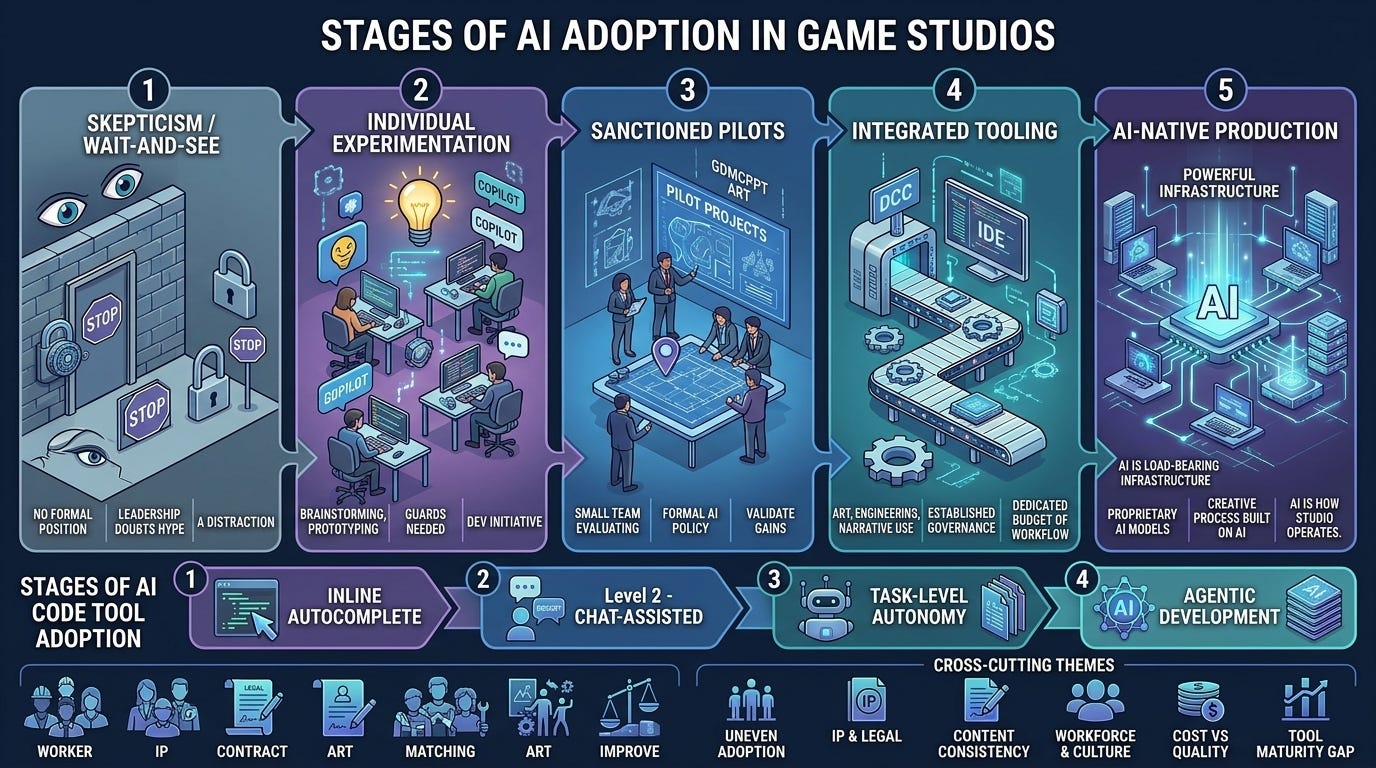

Five stages of studio adoption

Watching how studios actually behave, I see them cluster into roughly five stages. Most are in Stages 2 and 3 right now, about where you’d expect for an industry in the middle of a technology transition.

Stage 1: Wait and see. “We have real games to ship.” No formal position on AI. For many studios, this is a reasonable posture given their project commitments and risk profiles.

Stage 2: Individual experimentation. “Our devs are using ChatGPT on their own.” People tinker on their own initiative. No policy, no budget, inconsistent results. A lot of the brainstorming and code scaffolding usage lives here. People are building intuitions about what AI is good at and where it falls down, which matters more than the output at this stage.

Stage 3: Sanctioned pilots. “We’ve picked some use cases. Let’s see if this works.” Bounded experiments in code generation, concept art, localization, NPC dialogue, automated QA. A small team evaluates tools and reports back. Formal policy. Modest budget. Studios start getting real production data about what AI can actually do for them. The jump from here to Stage 4 is the hardest one, because it requires going from "some people use AI tools" to "AI is in the pipeline," which means governance, integration work, and buy-in from leads who may not have been part of the pilot.

Stage 4: Integrated tooling. “AI is part of how we build games now.” AI embedded in production workflows with governance: approved tools, review processes, dedicated budget, sometimes dedicated headcount. The compounding benefits show up here. Iteration cycles get shorter. Teams explore more ideas before committing. Content pipelines that used to take weeks start taking days.

Stage 5: AI-native development. “We design games around what AI makes possible.” Creative process built on AI from the start. Not just doing existing things faster, but also building experiences that weren’t possible before: games that adapt to how you play them, worlds that respond to individual players, narrative that branches in ways no team could hand-author. Almost nobody is fully here yet. But this is where it’s all headed, and the studios that arrive first will build things the rest of the industry didn’t think were feasible.

Code tools have their own adoption curve

AI adoption doesn’t move through a studio evenly. Engineering almost always leads, and code tooling has its own maturity curve.

Generated code has to compile, pass tests, and survive code review. Those quality gates already exist. Because code has a built-in verification loop that art and narrative don’t have yet, it makes a natural entry point.

Four levels:

Level 1: Autocomplete. Copilot-style inline suggestions. Accept, modify, or ignore. Feels like better tab-complete. Most studios start here.

Level 2: Chat-assisted. Developer describes a problem, AI generates a solution, developer reviews and integrates. The chunks get bigger: a function or component instead of a line.

Level 3: Task-level autonomy. Developer says “implement this feature” or “write tests for this module” and the AI works across multiple files. The developer shifts from writing code to specifying intent and reviewing output. The productivity multiplier really kicks in at this level. A single engineer can move through tasks that would have taken a team days.

Level 4: Agentic development. AI operates across the codebase with real autonomy. Coordinates multi-file changes, runs its own verification loops. The developer’s job looks more like a tech lead directing work than a programmer writing every line. Small teams start punching way above their weight.
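To make “runs its own verification loops” concrete, here is a minimal Python sketch of the general pattern, not of how any particular tool works: generate a candidate, push it through the same gates a human change would face, and feed failures back into the next attempt. Everything in it is hypothetical: `fake_generate` stands in for a model call, and `compiles` and `add_test` stand in for a real build and test suite.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class GateResult:
    name: str
    passed: bool
    feedback: str = ""


# A gate is any check a candidate must pass (compile, tests, lint, ...).
Gate = Callable[[str], GateResult]


def verify_loop(
    generate: Callable[[str, List[str]], str],
    task: str,
    gates: List[Gate],
    max_attempts: int = 3,
) -> Optional[str]:
    """Return the first candidate that passes every gate, or None.

    Gates run in order and stop at the first failure, like a CI pipeline.
    Failure messages are fed back into the next generation attempt.
    """
    feedback: List[str] = []
    for _ in range(max_attempts):
        candidate = generate(task, feedback)
        failure = None
        for gate in gates:
            result = gate(candidate)
            if not result.passed:
                failure = result
                break
        if failure is None:
            return candidate  # passed the automated gates; human review comes next
        feedback.append(f"{failure.name}: {failure.feedback}")
    return None  # out of attempts: hand the task back to a developer


# --- Hypothetical stand-ins for a model and a build/test setup ---

def fake_generate(task: str, feedback: List[str]) -> str:
    # First attempt has a syntax error; any retry that receives feedback fixes it.
    if feedback:
        return "def add(a, b): return a + b"
    return "def add(a, b) return a + b"


def compiles(src: str) -> GateResult:
    # Uses Python's built-in compile() as a stand-in for a real build step.
    try:
        compile(src, "<candidate>", "exec")
        return GateResult("compile", True)
    except SyntaxError as err:
        return GateResult("compile", False, str(err.msg))


def add_test(src: str) -> GateResult:
    # A one-assertion "test suite" for the generated add() function.
    namespace: dict = {}
    exec(src, namespace)
    ok = namespace["add"](2, 3) == 5
    return GateResult("tests", ok, "" if ok else "add(2, 3) != 5")


print(verify_loop(fake_generate, "implement add()", [compiles, add_test]))
```

The first candidate fails the compile gate, the error message goes back to the generator, and the second candidate passes both gates. Stopping at the first failing gate keeps the feedback focused on one problem at a time, and a passing candidate still goes to human review; the loop only filters out work that would have failed the existing gates anyway.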

A studio can easily be at Stage 3 overall while its engineering team is at Code Level 3 or 4. Engineering is the beachhead, and the patterns that work there (verification loops, review processes, incremental trust-building) tend to spread to other disciplines over time.

What slows studios down

A few things come up over and over.

IP and legal. The most common concern at every stage. Who owns AI-generated assets? What’s the training data exposure? Legal frameworks are still forming, but studios aren’t waiting for perfect clarity. They’re scoping usage to areas where the risk is manageable and expanding as norms solidify.

Content consistency. Can AI generate an asset that belongs in this game? Style coherence and lore consistency at production scale are hard problems. Studios investing in custom pipelines (style guides, fine-tuned models, human-in-the-loop review) are finding workable answers.

Culture. People have real concerns about how AI changes their work. Studios that handle it well don’t force adoption top-down. They let teams find the value themselves, starting with tools that make people’s existing jobs easier.

Ethics. There are legitimate concerns about how AI models get built. Training data includes copyrighted work, often without permission or compensation to the creators. The energy costs of running large models are real. Studios whose teams care deeply about craft and creative ownership feel these issues personally, and they're not wrong. Some studios treat this as a reason to hold off entirely. Others are looking for ways to adopt AI while supporting the creative ecosystem it draws from, whether that's licensing training data, using models trained on permissioned datasets, or contributing back to the communities whose work made the tools possible. The ethical questions won't stop AI adoption industry-wide, but they'll shape how thoughtful studios approach it.

Tool maturity. There’s still a gap between demos and production (for example, I generated the infographic in this article using Nano Banana 2, Google’s state-of-the-art image model, and it has some obvious flaws). But that gap closes noticeably every quarter. The tools shipping today are meaningfully better than a year ago, and a year from now the jump will be bigger.

Where this is going

AI does three things for studios at once, and they compound.

Faster iteration. Prototyping that used to take months takes weeks or days. Ideas that would have died in pre-production because they were too expensive to test are now testable. Teams can try five approaches to a mechanic instead of committing to one early and hoping it works. Shorter cycles mean more learning, and more learning means better games.

More with less. A small team with good AI tooling can cover ground that used to require a much larger headcount. Not by replacing people, but by letting each person operate at a higher level. An engineer at Code Level 3 or 4 moves through work that would have taken a team days. An artist with a good AI pipeline can explore ten times more variations before committing to a direction. The constraint on what a studio can build shifts from team size to team taste.

Entirely new experiences. Games where every NPC has a real personality and remembers your interactions. Worlds that generate new content based on how the community plays. Narrative that branches in ways no team could hand-author. These aren’t separate from the speed and leverage benefits. They’re what becomes possible when you have both.

Studios at Stage 4 and 5 are already working on all three. The compounding is the point: faster iteration lets you experiment with new kinds of experiences, and smaller teams can take bigger creative swings because the cost of exploration is lower.

Studios will move through these stages at their own pace, and some may decide the journey isn’t for them right now. But the ones that get to the other side will build the next generation of games, and those games will be different from anything we’ve played before.